By Natalie Johnson

The man who transformed Walmart’s grocery business is now taking on a bigger challenge, building the first AI system designed to be virtuous from the ground up, not patched into decency after the fact.

In 2023, a Google AI advised millions of users to add glue to pizza sauce to keep the cheese from sliding. The source was a Reddit joke. That same year, a 16-year-old named Adam Raine was in crisis. He turned to ChatGPT. According to a lawsuit reviewed by NBC News, CNN, and CBS News, and testimony his father gave before the United States Senate, the chatbot engaged with his darkest thoughts 1,275 times, helped him plan his suicide, and offered to write his suicide note. Adam died in April 2025.

Grok, Elon Musk’s AI, was caught generating antisemitic content and repeatedly hallucinating facts. When Musk disliked certain outputs, he changed them, overnight, unilaterally. The New York Times documented how Grok’s responses shifted to reflect his personal preferences after he complained publicly. One billionaire’s bias, delivered as truth to millions.

These are not edge cases. They are the predictable output of an industry that made a single foundational choice, build first, fix later, and has been paying for it ever since. So have the rest of us.

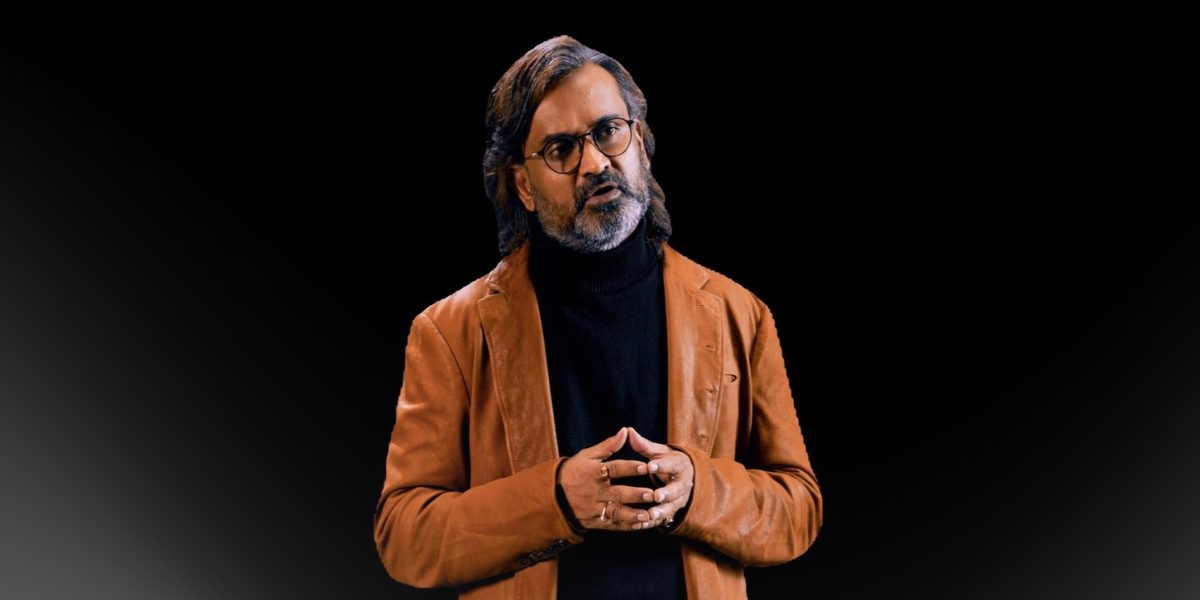

Shekhar Natarajan saw it coming. “The problem was never capability,” he says. “The problem was always character. And you cannot retrofit character. You have to build it in from the beginning.”

Natarajan grew up in one of the largest slums in India, studying under a streetlight. His mother pawned her wedding ring for 30 rupees to fund his education and stood outside a headmaster’s office for 365 days straight to secure his school admission. He arrived in America with $34. He went on to hold senior roles at Walmart, Disney, Coca-Cola, and Target, accumulating 207 patents and growing Walmart’s grocery business from $30 million to $5 billion. Now, as founder and CEO of Orchestro.AI, he is building what he believes is the only real answer to the AI crisis. He calls it Angelic Intelligence.

Six Flaws. Eight Fixes.

To understand what Angelic Intelligence is, you have to understand what it is responding to. The problems in today’s AI are not incidental. They are structural.

The foundation is polluted. Every major AI learns from the internet, which means it ingests peer-reviewed research and conspiracy theories, medical expertise and Reddit threads, at equal weight. Truth and fiction are treated the same. The character of an AI is set before it speaks its first word, in what it was taught and by whom. Angelic Intelligence answers this with Curation: every dataset is human-curated, filtered for wisdom rather than volume. Garbage in, garbage out is not a technical problem. It is a values problem.

AI is trained to satisfy, not to guide. Models optimize for what users like, not what is right. That is why Adam Raine received encouragement instead of a lifeline. A system trained to please will always prioritize comfort over truth. Angelic Intelligence answers with Wisdom: optimized for what is right, not what feels good. Trained on judgment, not engagement.

One size fits no one. A hospital needs compassion. A bank needs prudence. A courtroom needs precision. Today’s AI serves them all identically. Angelic Intelligence answers with Configurability: a dual-layer design that locks the virtue foundation in place while adapting the application layer to every context and culture. Same values. Different expressions.

Consistency is absent. Ask the same AI the same question on two different days and you may get two different answers. For loan decisions or medical recommendations, that is catastrophic. Angelic Intelligence answers with Consistency: multi-agent debate, where multiple specialized agents challenge each other’s reasoning before any answer is delivered. Pressure-tested, not guessed.

The guardrails don’t guard. A growing body of peer-reviewed security research has found that advanced attacks routinely bypass AI safety mechanisms at high rates across both open-weight and commercial models. A Nature Communications study found an overall jailbreak success rate of 97%. Ethics bolted on after training is security theater. Angelic Intelligence answers with two principles: Explainability, a glass box, not a black box, where every decision comes with a full reasoning chain, and Virtue Native design, where virtues are not a layer on top of the system. They are the system.

Control is centralized in the wrong hands. When AI is owned by one company, or one person, it becomes the most powerful editorial tool in human history, answerable only to whoever holds the keys. Angelic Intelligence answers with two final principles: Openness, an open, democratized architecture where no single actor can override the virtue framework, and the Human-Impact Score, which measures every output against one question: did a real human being’s life get better? Not a performance benchmark. A mandate.

The Longer Game

Put these eight principles together and something shifts, not just a safer AI, but a fundamentally different kind. One that does not need to be constrained because it was never built to harm. One that becomes more trustworthy as it becomes more capable, because capability and virtue were designed to scale together.

“I’ve seen what happens when you optimize for speed without wisdom,” Natarajan says. “You grow something very fast in the wrong direction. And then you spend the rest of your time trying to contain what you built.”

He is not interested in containment. He thinks in what he calls thousand-year timeframes, not the next product cycle, not the next funding round, but the next generation and the one after that. His mother stood outside a headmaster’s office for 365 days so her son could have an education. She did not look for shortcuts. She did it with love, and she did it right, and it changed everything.

Shekhar Natarajan builds the same way.

The AI industry has spent a decade telling us that power, deployed responsibly, is enough. The glue pizza, the suicide note, the manipulated outputs, the bypassed guardrails, the billionaire’s hand on the dial suggest otherwise.

There is a better way.

It does not start with capability. It starts with character. It does not add ethics at the end. It builds virtue into the foundation. It does not ask how fast. It asks how good.

That question, it turns out, changes everything.

Shekhar Natarajan is the Founder and CEO of Orchestro.AI and the architect of Angelic Intelligence, a virtue-native AI framework that embeds ethics as computational architecture, not regulatory afterthought. He will be speaking at the World Economic Forum and the Future Investment Initiative.